Case Study - Multi-Source Retrieval Infrastructure with Access Boundaries

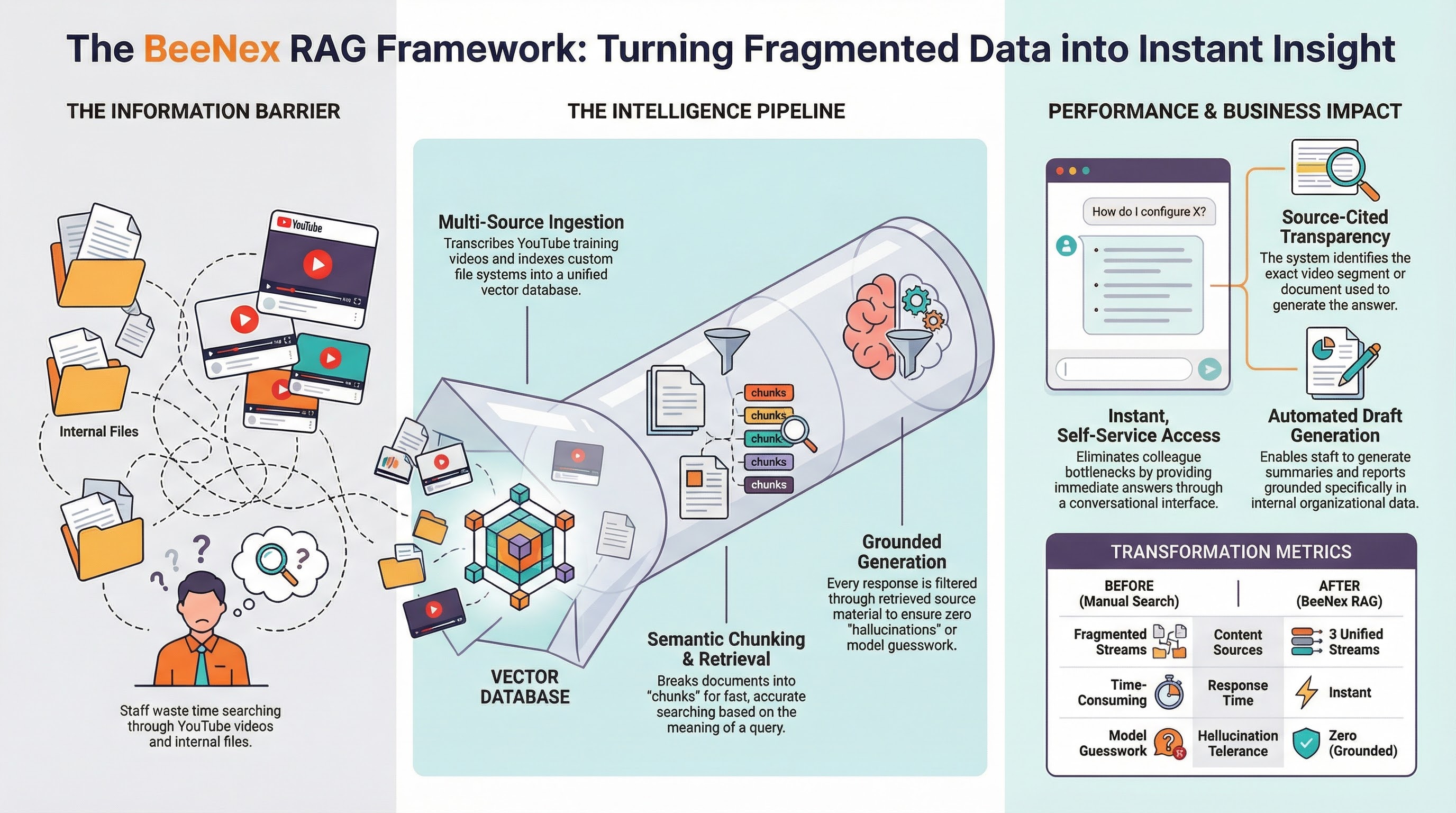

Engineering a multi-source retrieval infrastructure with boundary-enforced access policies that ingests YouTube content, custom document repositories, and institutional knowledge to provide policy-enforced, source-attributed answers for internal staff.

- Client: Center for Child Counseling

- Year

- Service: Retrieval Infrastructure, Access Boundary Enforcement, Cloud Deployment, RAG Pipeline Engineering

System Architecture Snapshot

- Data Layer — YouTube transcription, custom file system connector, document chunking

- Retrieval Layer — RAG pipeline with Vertex AI, vector database, semantic search

- Control Layer — Authentication, access boundary enforcement, source citation

- Interface Layer — Conversational interface with content generation capabilities
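To make the Control Layer concrete, here is a minimal sketch of access boundary enforcement applied to retrieved content before it reaches the model. The case study does not describe the actual policy model, so the `Chunk` type, the `allowed_roles` field, the role names, and the sample corpus are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    text: str
    source: str               # citation target, e.g. a file name (hypothetical)
    allowed_roles: frozenset  # roles permitted to read this chunk (hypothetical)


def enforce_boundary(chunks, user_roles):
    """Keep only the chunks that at least one of the user's roles may read."""
    roles = set(user_roles)
    return [c for c in chunks if roles & c.allowed_roles]


# Illustrative corpus only; not real organizational data.
CORPUS = [
    Chunk("Intake forms are filed after the first session.",
          "handbook.pdf", frozenset({"staff", "admin"})),
    Chunk("Restricted administrative notes.",
          "admin/notes.docx", frozenset({"admin"})),
]

visible = enforce_boundary(CORPUS, ["staff"])
for chunk in visible:
    print(f"{chunk.text} (source: {chunk.source})")
```

Filtering before generation, rather than after, means restricted text never enters the prompt, so the model cannot paraphrase it into an answer.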

The Challenge

Staff frequently needed information spread across multiple internal sources — including YouTube training videos, custom document repositories, and institutional knowledge that lived in people's heads. Finding the right answer meant searching multiple places or asking colleagues, creating bottlenecks and inconsistent responses.

The organization needed a system that could surface the right answer from the right source — instantly, and with citation.

The Solution

BeeNex engineered an internal retrieval system powered by RAG (Retrieval-Augmented Generation) that ingests content from multiple sources and provides accurate, source-backed answers through a conversational interface.

YouTube Content Ingestion

The system transcribes and indexes video content so staff can search training materials and institutional videos by asking questions in natural language. Instead of scrubbing through hour-long recordings to find the answer to a specific question, staff ask the system, which retrieves the relevant segment and cites the source.
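One way to make a video segment citable is to keep the start time of each transcript passage alongside its text. The sketch below assumes transcripts arrive as short timestamped caption lines; the `Segment` type, `window_segments`, `cite`, and the 200-character limit are illustrative choices, not details from the project.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    video_id: str
    start_seconds: int
    text: str


def window_segments(segments, max_chars=200):
    """Merge consecutive caption lines of one video into larger passages.

    Each passage keeps the start time of its first caption line, so a
    search hit can point back to the moment in the recording.
    """
    passages = []
    current = None
    for seg in segments:
        fits = (
            current is not None
            and seg.video_id == current.video_id
            and len(current.text) + 1 + len(seg.text) <= max_chars
        )
        if fits:
            current = Segment(current.video_id, current.start_seconds,
                              current.text + " " + seg.text)
        else:
            if current is not None:
                passages.append(current)
            current = seg
    if current is not None:
        passages.append(current)
    return passages


def cite(passage):
    """Format a human-readable citation with an mm:ss timestamp."""
    minutes, seconds = divmod(passage.start_seconds, 60)
    return f"video {passage.video_id} at {minutes:02d}:{seconds:02d}"
```

The merged passages can then be indexed like any other document chunk, with `cite(passage)` supplying the source reference shown to staff.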

Custom File System Connector

A connector pipeline indexes internal documents and knowledge base materials from the organization's file systems. Documents are chunked, embedded, and stored in a vector database for fast semantic search at query time.
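The chunk, embed, store, and search steps can be sketched in a few lines. This is a toy, in-memory illustration: the bag-of-words `embed` function stands in for a real embedding model, `VectorIndex` stands in for the vector database, and the chunk size and overlap values are assumptions rather than the project's settings.

```python
import math
import re
from collections import Counter


def chunk_text(text, size=40, overlap=10):
    """Split text into overlapping word windows (requires overlap < size)."""
    words = text.split()
    if not words:
        return []
    step = size - overlap
    stop = max(len(words) - overlap, 1)
    return [" ".join(words[i:i + size]) for i in range(0, stop, step)]


def embed(text):
    """Toy embedding: word counts. A real system would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


class VectorIndex:
    """In-memory stand-in for a vector database."""

    def __init__(self):
        self.rows = []  # (vector, chunk text, source)

    def add_document(self, source, text, size=40, overlap=10):
        for chunk in chunk_text(text, size, overlap):
            self.rows.append((embed(chunk), chunk, source))

    def search(self, query, k=3):
        q = embed(query)
        ranked = sorted(self.rows, key=lambda r: cosine(q, r[0]), reverse=True)
        return [(chunk, source) for _, chunk, source in ranked[:k]]


index = VectorIndex()
index.add_document("leave-policy.txt",
                   "Staff request leave through the HR portal two weeks ahead")
index.add_document("intake.txt",
                   "New client intake forms are filed within two days")
top_chunk, top_source = index.search("how do I request leave", k=1)[0]
```

Overlapping windows reduce the chance that a sentence answering the question is split across two chunks and matched by neither.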

RAG Pipeline for Grounded Answers

Every response is grounded in source material. The system retrieves relevant content before generating a response, which reduces hallucination and keeps answers tied to actual organizational documents and videos rather than model guesswork.
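The retrieve-then-generate order can be illustrated with a small sketch. The prompt wording, the `build_grounded_prompt` and `answer` functions, and the refusal message are assumptions for illustration; `retrieve` and `generate` are passed in as plain callables so that no particular model or database API is implied.

```python
def build_grounded_prompt(question, passages):
    """Build a prompt from (text, source) pairs; return None if there are none."""
    if not passages:
        return None
    context = "\n".join(
        f"[{i}] ({source}) {text}"
        for i, (text, source) in enumerate(passages, 1)
    )
    return (
        "Answer using only the numbered sources below and cite them like [1]. "
        "If the sources do not contain the answer, say so.\n\n"
        f"Sources:\n{context}\n\nQuestion: {question}"
    )


def answer(question, retrieve, generate):
    """Retrieve first, then generate; decline when nothing was retrieved."""
    passages = retrieve(question)
    prompt = build_grounded_prompt(question, passages)
    if prompt is None:
        return {"answer": "No matching source was found.", "sources": []}
    return {
        "answer": generate(prompt),
        "sources": [source for _, source in passages],
    }
```

Declining when retrieval comes back empty is one simple way to avoid ungrounded answers: the model is never asked to respond without source text in front of it.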

Content Generation

Beyond Q&A, the system drafts content from indexed knowledge, so staff can generate first drafts of communications, summaries, and reports grounded in organizational data.

- Google Cloud Platform

- Vertex AI

- RAG Pipeline

- YouTube Content Ingestion

- Custom File System Connector

- Vector Database

- Content Generation

Results

- Content sources unified: 3

- Source-cited answers for staff: Instant

- Hallucination tolerance with RAG grounding: Zero

- Knowledge access without colleague bottlenecks: Self-service

The best support system doesn't just answer questions; it knows where the answer came from. Staff now get instant, accurate answers with citations, without having to search multiple systems or interrupt colleagues.